Managing Proxy Failover and Redundancy

When scraping or automation pipelines start missing data, the root cause is often not access—it is recovery. A request fails, the system retries poorly, and costs rise while output drops. That is why a clear proxy failover strategy is critical.

What you’ll get here is a practical approach to designing failover and redundancy so your system keeps producing usable results under real-world conditions.

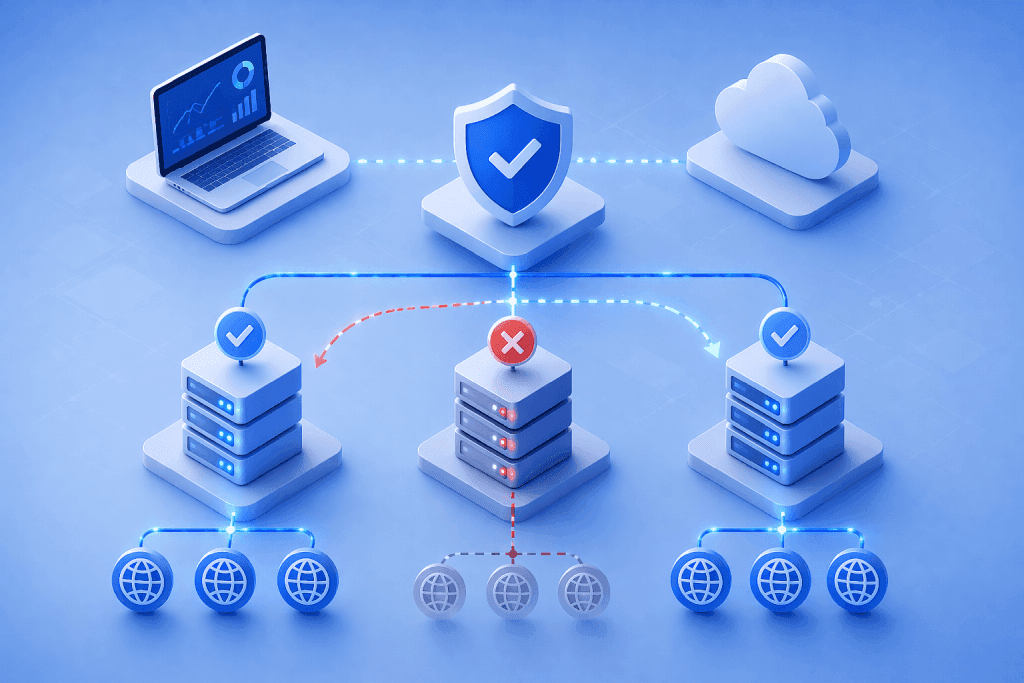

A proxy failover strategy defines how your system reacts to errors: when to retry, which proxy to switch to, when to change proxy type, and when to stop. Done well, it limits wasted requests, stabilizes sessions, and protects overall throughput.

Why failover design matters more at scale

At small scale, failures look random. At higher volume, patterns emerge.

Targets rate-limit bursts, block repeated IPs, or degrade responses under pressure. If your system reacts with blind retries, you amplify the problem. A structured failover layer turns those failures into controlled outcomes.

Across different proxy use cases, teams that treat failover as a first-class component consistently see better stability and lower cost per result.

What failover and redundancy actually control

A robust failover layer answers four questions for every failed request:

- Should this request be retried?

- Should it use the same proxy or a different one?

- Should it switch proxy type?

- When should the workflow stop?

Redundancy complements this by ensuring there are alternative routes available when one path fails.

In plain terms: failover decides what to do next; redundancy ensures there is a next option.

Common failure modes you need to plan for

Not all failures look the same, and each one needs a slightly different response.

- Rate limits (429): Too many requests in a short window

- Access blocks (403): Target has flagged the IP or pattern

- Timeouts: Network or target latency exceeds limits

- Soft blocks: CAPTCHA, challenge pages, or empty responses

- Session breaks: Login or navigation flow resets unexpectedly

Treating all of these with the same retry logic is one of the most common causes of inefficiency.

Core components of a proxy failover strategy

Error classification

Start by classifying failures into actionable categories.

For example:

- retryable with same proxy

- retryable with different proxy

- requires proxy type switch

- non-retryable (fail fast)

This prevents unnecessary retries and keeps the system responsive.

Retry policies with limits

Retries should be bounded and intentional.

Define:

- maximum retries per request

- delay or backoff windows

- escalation path (same proxy → new proxy → different proxy type)

In plain terms: retries should improve the chance of success, not just increase activity.

Proxy type fallback

Different proxy types handle friction differently.

A practical pattern is:

- start with datacenter proxies for speed and cost efficiency

- escalate to residential proxies when blocks or geo constraints appear

This preserves efficiency while still giving you a path to recover harder requests.

Health-aware routing

Failover should not treat all proxies equally.

Track signals such as:

- recent success rate

- latency trends

- block frequency

- retry depth

Then reduce traffic to weak proxies and favor healthier ones. This prevents cascading failures across the pool.

Redundancy across pools

Redundancy means having multiple proxy groups available for the same workload.

This can include:

- multiple subnets or IP ranges

- separate datacenter pools

- separate residential pools

- hybrid routing between types

If one pool degrades, traffic can shift without stopping the pipeline.

Designing a practical failover flow

A simple but effective flow often looks like this:

- Send request using primary proxy pool

- If failure occurs, classify the error

- Retry with adjusted timing or headers if appropriate

- Switch to a different proxy within the same pool

- Escalate to a different proxy type if needed

- Stop after defined retry limit

This layered approach prevents both over-retry and under-recovery.

When to switch proxy types

Switching proxy types too early increases cost. Switching too late increases failure rates.

Use signals such as:

- repeated 403 or challenge responses

- geo mismatch issues

- unstable sessions on protected endpoints

As a guideline, treat proxy type escalation as a targeted fallback, not a default path.

Real-world scenario: recovering blocked product requests

Imagine a system collecting product data across multiple sites. Category pages succeed on datacenter routes, but product pages occasionally return challenge responses.

A failover strategy detects the pattern and escalates only those requests to residential routes. The rest of the traffic stays on cheaper infrastructure. This keeps both success rates and costs under control.

Watch out for this

Unlimited retries

Retrying without limits can multiply cost without improving results.

Switching proxies without changing behavior

If request timing or patterns stay the same, simply changing IPs may not help.

No separation between failure types

Treating all failures as identical leads to inefficient recovery.

Lack of redundancy

If all traffic depends on one pool, a single issue can disrupt the entire pipeline.

Ignoring cost impact

Failover decisions should consider cost per successful result, not just raw success rate.

What to measure in a failover system

A proxy failover strategy should be evaluated using operational metrics.

Track:

- success rate after retry

- retry depth per request

- escalation rate to secondary pools

- latency impact of retries

- cost per successful response

A simple metric is:

CPSR = total request-related spend / successful responses

In plain terms: how much you paid for each usable result after accounting for retries.

This helps reveal whether failover is improving efficiency or just adding overhead.

Aligning failover with budget and scale

Failover decisions affect cost directly. Escalating too often to premium proxy types increases spend quickly.

It helps to align your strategy with available proxy plans and pricing and define clear thresholds for escalation. This keeps recovery controlled and predictable.

When to revisit your failover design

Review your setup when you see:

- rising retries without better success rates

- increased use of fallback proxy types

- longer task completion times

- unstable session-based workflows

- growing cost without increased output

These signals often point to misaligned retry rules or insufficient redundancy.

Frequently Asked Questions

What is a proxy failover strategy?

It is a set of rules that defines how your system reacts to request failures, including retries, proxy switching, and escalation paths.

How many retries should I allow per request?

There is no fixed number. It depends on the target and workload. Start with a small limit and adjust based on success rate and cost impact.

When should I switch from datacenter to residential proxies?

When you see repeated blocks, challenge pages, or geo-related issues that datacenter proxies cannot handle reliably.

Is redundancy always necessary?

For small systems, it may not be critical. For high-volume or business-critical pipelines, redundancy helps prevent single points of failure.

How do I know if failover is working?

If success rates improve without a large increase in retries or cost, the strategy is likely effective. Monitoring CPSR is a good indicator.

Where can I learn more about implementing proxy setups?

If you are building or refining your setup, the proxy tutorials section provides practical guidance for different environments.

Final thoughts

A strong proxy failover strategy is not about retrying everything. It is about recovering intelligently while protecting cost and stability.

Start by classifying failures, setting clear retry limits, and adding redundancy where it matters most. Then refine your approach based on real performance data, one layer at a time.

About the author

Elena Kovacs

Elena Kovacs works at the intersection of data strategy and proxy infrastructure. She designs scalable, geo-targeted data collection frameworks for SEO monitoring, market intelligence, and AI datasets. Her writing explores how proxy networks enable reliable, compliant data acquisition at scale.