Proxy Pool Architecture for High-Volume Data Collection

When a data collection system starts missing pages, burning through retries, or slowing down under load, the issue is often not the parser. It is the proxy layer. Weak routing, poor rotation logic, and unhealthy IPs can turn a fast crawler into an expensive one. That is why proxy pool architecture matters.

What you’ll get here is a practical guide to building a proxy pool that can support high-volume collection without losing stability, coverage, or cost control.

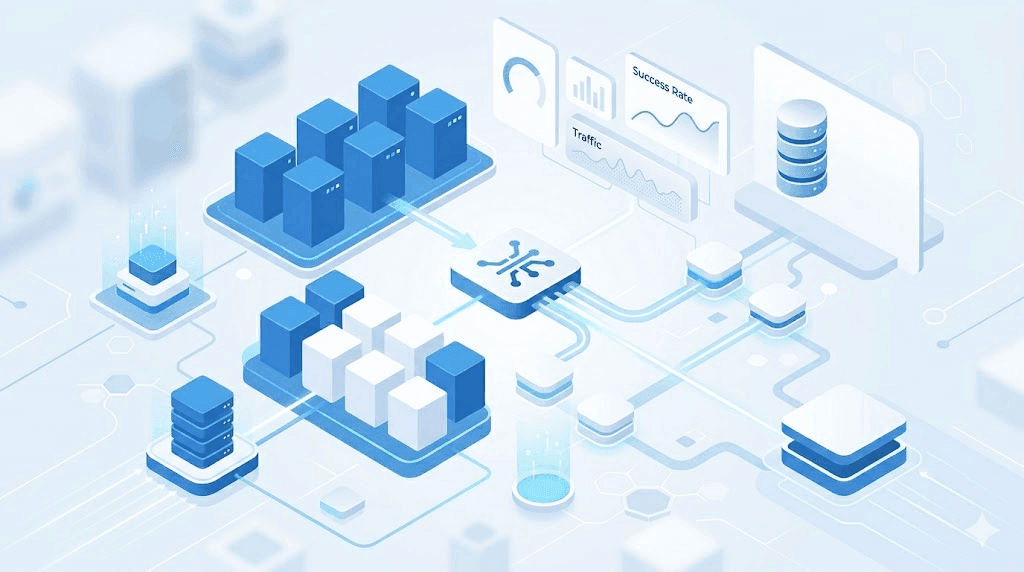

Proxy pool architecture is the system that organizes how proxies are grouped, selected, rotated, monitored, and replaced so a high-volume scraper can keep producing usable responses at scale.

Why proxy pools become a bottleneck before most teams expect it

A small scraping workflow can survive with a basic proxy list and simple rotation. A large one usually cannot. Once request volume rises, targets start responding differently. They rate-limit more aggressively, block repeated patterns, and punish unstable session behavior.

That shift turns proxies from a background utility into a core part of infrastructure. At that point, the real question is no longer “Which proxies do we have?” It becomes “How does the system decide which proxy to use, when to rotate, and when to stop trusting a route?”

If you look across different proxy use cases, that pattern shows up quickly. SEO monitoring, product extraction, login-based scraping, and market intelligence all put different pressure on the same pool.

What a high-volume proxy pool actually has to do

A good pool does more than spread traffic. It has to help the system stay efficient under changing target behavior.

At minimum, it should be able to:

- assign the right proxy to the right request

- rotate only when rotation helps more than it hurts

- preserve continuity when sessions matter

- detect weak proxies before they drag down the whole pipeline

- keep cost proportional to usable output

In plain terms: the job of a proxy pool is not just to hide requests. It is to keep request quality stable as traffic scales.

The main layers of proxy pool architecture

Inventory and segmentation

The first layer is supply. You need enough proxies, but size alone does not make a pool effective. The pool should be segmented by workload and target behavior.

A common pattern is to keep one group for fast, lower-friction traffic and another for protected or more sensitive traffic. In practice, that often means using datacenter proxies for bulk public requests and residential proxies for requests where trust, location, or session continuity matters more.

This split matters because a high-volume system becomes inefficient quickly when expensive proxy resources are wasted on easy traffic.
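A minimal sketch of a segmented inventory, assuming each proxy carries a type tag and is assigned to a named workload segment. The `Proxy` and `SegmentedInventory` classes, the segment names, and the example addresses (from the 203.0.113.0/24 documentation range) are hypothetical, not any provider's API.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Proxy:
    url: str      # hypothetical endpoint format, e.g. "http://host:port"
    kind: str     # "datacenter" or "residential"
    country: str  # ISO country code used for geo targeting


class SegmentedInventory:
    """Group proxies into named segments so each workload draws from its own supply."""

    def __init__(self) -> None:
        self._segments: dict[str, list[Proxy]] = defaultdict(list)

    def add(self, segment: str, proxy: Proxy) -> None:
        self._segments[segment].append(proxy)

    def get(self, segment: str) -> list[Proxy]:
        # Return a copy so callers cannot mutate the inventory by accident.
        return list(self._segments.get(segment, []))


inventory = SegmentedInventory()
inventory.add("bulk_public", Proxy("http://203.0.113.10:8000", "datacenter", "US"))
inventory.add("bulk_public", Proxy("http://203.0.113.11:8000", "datacenter", "US"))
inventory.add("protected", Proxy("http://203.0.113.20:8000", "residential", "DE"))
```

Keeping datacenter supply in its own segment means easy traffic has to be routed to residential capacity deliberately, rather than drifting onto it by default.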

Routing logic

Routing decides which proxy handles which request.

A round-robin model may work at the start, but it usually becomes too blunt as workloads grow. Better systems route by domain, endpoint type, geography, or session requirement. That allows the pool to treat a public listing page differently from a checkout flow or an authenticated dashboard.

For systems built around web scraping proxies, this is where reliability often improves the most. Smart routing reduces wasted retries because traffic is matched to the right kind of proxy from the start.
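One way to express route-by-attribute logic is an ordered rule table where the first matching rule wins. This is a sketch under assumptions: the `Request` and `Rule` types, the endpoint labels, and the segment names are hypothetical, and a rule field left as `None` acts as a wildcard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Request:
    domain: str
    endpoint: str                  # e.g. "listing", "checkout", "dashboard"
    country: Optional[str] = None  # required exit country, if any
    needs_session: bool = False


@dataclass(frozen=True)
class Rule:
    segment: str
    domain: Optional[str] = None
    endpoint: Optional[str] = None
    geo_required: Optional[bool] = None
    needs_session: Optional[bool] = None

    def matches(self, req: Request) -> bool:
        # Any field left as None matches every request.
        if self.domain is not None and self.domain != req.domain:
            return False
        if self.endpoint is not None and self.endpoint != req.endpoint:
            return False
        if self.geo_required is not None and self.geo_required != (req.country is not None):
            return False
        if self.needs_session is not None and self.needs_session != req.needs_session:
            return False
        return True


def route(req: Request, rules: list[Rule], default: str = "bulk_public") -> str:
    """Return the segment of the first matching rule; rules are ordered by priority."""
    for rule in rules:
        if rule.matches(req):
            return rule.segment
    return default


RULES = [
    Rule(segment="protected_sticky", needs_session=True),
    Rule(segment="residential_geo", geo_required=True),
    Rule(segment="protected", endpoint="checkout"),
    Rule(segment="bulk_public", endpoint="listing"),
]
```

Ordering rules from most to least specific keeps session-bound and geo-bound traffic from falling through to the cheap default segment.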

Rotation policy

Rotation controls when an IP changes and when it remains stable.

There are three common models:

- per-request rotation for low-state traffic

- sticky sessions for workflows that need continuity

- adaptive rotation based on response quality, errors, or blocks

Too much rotation can break sessions and create unstable behavior. Too little can overexpose an IP and increase blocks. Good rotation is tied to target behavior, not to a fixed habit.
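The three rotation models can share one interface. In this hypothetical `Rotator`, the adaptive mode is simplified to "rotate after N consecutive failures"; a production system would more likely feed it block signals and response-quality checks as well.

```python
import itertools
from enum import Enum
from typing import Optional


class Mode(Enum):
    PER_REQUEST = "per_request"  # new IP on every request
    STICKY = "sticky"            # keep the same IP for the whole workflow
    ADAPTIVE = "adaptive"        # keep the IP until it starts failing


class Rotator:
    def __init__(self, proxies: list[str], mode: Mode, max_failures: int = 2) -> None:
        if not proxies:
            raise ValueError("need at least one proxy")
        self._cycle = itertools.cycle(proxies)
        self.mode = mode
        self.max_failures = max_failures
        self.current: Optional[str] = None
        self._consecutive_failures = 0

    def next_proxy(self) -> str:
        if self.current is None or self.mode is Mode.PER_REQUEST:
            self.current = next(self._cycle)
        return self.current

    def report(self, ok: bool) -> None:
        """Record the outcome of the last request; only ADAPTIVE mode acts on it."""
        if self.mode is not Mode.ADAPTIVE:
            return
        self._consecutive_failures = 0 if ok else self._consecutive_failures + 1
        if self._consecutive_failures >= self.max_failures:
            self.current = next(self._cycle)  # rotate away from the failing IP
            self._consecutive_failures = 0
```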

Health scoring

Every proxy should be treated like a changing resource, not a permanent asset.

Track signals such as:

- success rate

- response time

- block frequency

- retry count

- geo accuracy

Then score proxies or proxy groups based on those signals. Strong performers stay active. Weak ones are cooled down, deprioritized, or removed.

Without scoring, poor proxies stay in circulation too long and quietly lower success rates across the pool.
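A sketch of a weighted health score built from the signals listed above. The weights, the latency budget, and the tier thresholds are illustrative assumptions, not recommended values; they should be tuned against your own targets.

```python
from dataclasses import dataclass


@dataclass
class ProxyStats:
    requests: int = 0
    successes: int = 0
    blocks: int = 0
    retries: int = 0
    geo_checks: int = 0
    geo_matches: int = 0
    total_latency_s: float = 0.0


def health_score(s: ProxyStats, latency_budget_s: float = 2.0) -> float:
    """Return a score in [0, 1]; the weights below are illustrative, not tuned."""
    if s.requests == 0:
        return 0.5  # neutral score for a proxy with no history yet
    success = s.successes / s.requests
    block_rate = s.blocks / s.requests
    retry_rate = min(s.retries / s.requests, 1.0)
    speed = max(0.0, 1.0 - (s.total_latency_s / s.requests) / latency_budget_s)
    geo = s.geo_matches / s.geo_checks if s.geo_checks else 1.0
    return (0.40 * success
            + 0.20 * (1.0 - block_rate)
            + 0.15 * (1.0 - retry_rate)
            + 0.15 * speed
            + 0.10 * geo)


def health_tier(score: float) -> str:
    """Map a score to an action: keep, deprioritize, cool down, or remove."""
    if score >= 0.75:
        return "active"
    if score >= 0.50:
        return "deprioritized"
    if score >= 0.30:
        return "cooldown"
    return "remove"
```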

Failover rules

Failures are part of the job. What matters is whether the system responds intelligently.

A failover layer should define:

- when to retry

- whether to retry with the same proxy or a new one

- when to switch proxy type

- when to stop instead of wasting more requests

If these rules are missing, retries can turn into cost inflation very quickly.
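The four failover questions can be encoded as a single decision function. The status-code mapping below is an assumption for illustration (for example, treating 403 and 429 as block signals and a connection error as a sign the proxy itself is down). Real targets often signal blocks through challenge pages returned with a 200 status, which this sketch does not detect.

```python
from enum import Enum


class Action(Enum):
    RETRY_SAME = "retry_same_proxy"
    RETRY_NEW = "retry_new_proxy"
    SWITCH_TYPE = "switch_proxy_type"
    STOP = "stop"


def failover(status: int, attempt: int, proxy_kind: str, max_attempts: int = 3) -> Action:
    """Decide the next step after a failed request.

    status: HTTP status code, or 0 for a connection error or timeout (an
    assumption of this sketch). attempt: 1-based count of attempts made so far.
    """
    if attempt >= max_attempts:
        return Action.STOP                 # cap reached: stop spending requests
    if status == 404:
        return Action.STOP                 # page is gone; retrying will not help
    if status in (403, 429):
        # Block or rate-limit signal: this IP is burned for now.
        if proxy_kind == "datacenter" and attempt >= 2:
            return Action.SWITCH_TYPE      # escalate to a residential route
        return Action.RETRY_NEW
    if status == 0:
        return Action.RETRY_NEW            # the proxy itself may be unreachable
    if status >= 500:
        return Action.RETRY_SAME           # likely a transient target-side error
    return Action.STOP
```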

How to design a pool that stays stable under volume

Step 1: classify the traffic first

Before deciding pool size or rotation intervals, classify the traffic.

Typical groups include:

- public and low-friction pages

- anonymous but paginated workflows

- login-dependent flows

- geo-sensitive content

- high-friction or high-value endpoints

This step is simple, but it changes everything. Once traffic is segmented by behavior, routing and rotation decisions become much more accurate.
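A minimal classifier for the traffic groups above, assuming requests can be labeled from their path, a login flag, and a required exit country. The path heuristics and the `high_value_paths` default are hypothetical; many teams keep this mapping in per-domain configuration instead.

```python
from enum import Enum
from typing import Optional


class TrafficClass(Enum):
    PUBLIC = "public_low_friction"
    PAGINATED = "anonymous_paginated"
    LOGIN = "login_dependent"
    GEO = "geo_sensitive"
    HIGH_FRICTION = "high_friction"


def classify_traffic(path: str, requires_login: bool, country: Optional[str],
                     high_value_paths: tuple[str, ...] = ("/checkout", "/pricing")) -> TrafficClass:
    """Assign a request to one group; checks run from most to least demanding."""
    if path.startswith(high_value_paths):
        return TrafficClass.HIGH_FRICTION
    if requires_login:
        return TrafficClass.LOGIN
    if country is not None:
        return TrafficClass.GEO
    if "page=" in path:
        return TrafficClass.PAGINATED
    return TrafficClass.PUBLIC
```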

Step 2: match proxy type to target friction

Use the least expensive setup that still clears the target reliably.

| Traffic pattern | Typical fit |
|---|---|
| Public pages and basic endpoints | Datacenter proxies |
| Login or stateful workflows | Residential proxies |
| Geo-sensitive requests | Residential proxies with location targeting |
| Mixed traffic across risk levels | Hybrid pool architecture |

This is also where budget planning becomes part of design. A pool should support the workload you actually expect, so it is worth comparing traffic segmentation against available proxy plans and pricing before scaling the system too far.
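The rule "use the least expensive setup that still clears the target reliably" can be applied directly once you have pilot data per target. The `Option` type, the 95% success threshold, and the prices in the example are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Option:
    name: str
    unit_cost: float     # hypothetical price per 1,000 requests
    success_rate: float  # observed on this target during a pilot, 0..1


def cheapest_reliable(options: list[Option], min_success: float = 0.95) -> Optional[Option]:
    """Return the lowest-cost option whose observed success rate meets the bar, if any."""
    eligible = [o for o in options if o.success_rate >= min_success]
    return min(eligible, key=lambda o: o.unit_cost, default=None)
```

If no option clears the bar, the function returns `None`, which is a signal to revisit pacing or session handling rather than to keep buying more of the same proxy type.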

Step 3: define session behavior clearly

Not every request needs continuity. Some do.

For example:

- public search pages may tolerate frequent IP changes

- cart and quote flows often need sticky sessions

- login-based tasks usually need continuity plus slower pacing

If continuity matters and the system rotates too aggressively, the pool may look healthy on paper while the actual workflow keeps failing.
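A sketch of sticky-session binding with a fixed time-to-live, assuming each stateful workflow carries a session ID and stateless requests pass `None`. The class name and the 600-second default TTL are hypothetical; the clock is injectable so the behavior can be tested without waiting.

```python
import itertools
import time
from typing import Callable, Optional


class StickySessions:
    """Pin each session ID to one proxy until its binding expires (fixed TTL)."""

    def __init__(self, proxies: list[str], ttl_s: float = 600.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        if not proxies:
            raise ValueError("need at least one proxy")
        self._cycle = itertools.cycle(proxies)
        self._ttl = ttl_s
        self._clock = clock
        self._bindings: dict[str, tuple[str, float]] = {}  # session ID -> (proxy, expiry)

    def proxy_for(self, session_id: Optional[str]) -> str:
        if session_id is None:
            return next(self._cycle)           # stateless request: rotate freely
        now = self._clock()
        bound = self._bindings.get(session_id)
        if bound is not None and now < bound[1]:
            return bound[0]                    # still within TTL: keep continuity
        proxy = next(self._cycle)              # new or expired session: bind a fresh proxy
        self._bindings[session_id] = (proxy, now + self._ttl)
        return proxy
```

A fixed TTL bounds how long one IP stays exposed on a sensitive flow; a sliding TTL (refreshed on each use) would favor continuity over exposure instead.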

Step 4: decide retry behavior before production

A weak retry policy can destroy efficiency.

Set rules for:

- maximum retries per request

- delay or backoff windows

- block signals that trigger proxy replacement

- request types that should fail fast instead of looping

In plain terms: retries should be strategic, not reflexive.
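A sketch of a capped retry loop with exponential backoff and full jitter, replacing the proxy only on block signals and skipping retries entirely for fail-fast request types. The `fetch` and `pick_proxy` callables, the status sets, and the request-type names are hypothetical stand-ins for your own HTTP client and pool.

```python
import random
import time
from typing import Callable

BLOCK_STATUSES = {403, 429}                          # assumption: treated as block signals
RETRYABLE_STATUSES = BLOCK_STATUSES | {500, 502, 503, 504}
FAIL_FAST_TYPES = {"login", "checkout"}              # hypothetical types that must not loop


def fetch_with_retries(
    fetch: Callable[[str], int],    # hypothetical: sends the request via a proxy, returns HTTP status
    pick_proxy: Callable[[], str],  # hypothetical: returns a fresh proxy from the pool
    request_type: str,
    max_retries: int = 3,
    base_delay_s: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> tuple[int, int]:
    """Return (final_status, attempts_made) under a capped retry policy."""
    allowed_retries = 0 if request_type in FAIL_FAST_TYPES else max_retries
    proxy = pick_proxy()
    attempt = 0
    while True:
        attempt += 1
        status = fetch(proxy)
        # Stop on success, on non-retryable errors, or once the retry cap is used up.
        if status not in RETRYABLE_STATUSES or attempt > allowed_retries:
            return status, attempt
        if status in BLOCK_STATUSES:
            proxy = pick_proxy()    # block signal: replace the proxy before retrying
        # Full jitter: wait a random time up to base * 2^(attempt - 1) seconds.
        sleep(random.uniform(0, base_delay_s * 2 ** (attempt - 1)))
```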

A practical model for high-volume pool design

For many teams, a strong baseline architecture looks like this:

- one datacenter pool for bulk, low-risk traffic

- one residential pool for protected or location-sensitive requests

- routing rules by domain or endpoint type

- health scoring updated continuously

- retry caps and automatic failover

This model is not the most complex possible system, but it is often the right place to start. It gives enough control to improve performance without making operations too heavy too early.

Real-world scenario: product data collection at scale

Imagine a team collecting product data across several major retail sites. Category pages and public listings may perform well on datacenter routes because they are easier to reach and cheaper to crawl.

But the moment the workflow touches inventory checks, protected pricing, or anti-bot-heavy pages, success rates may fall. A better design is often hybrid: keep low-friction traffic on datacenter routes and shift higher-friction endpoints to residential routes with tighter session control.

The gain is not just better access. It is fewer wasted attempts per usable result.

Watch out for this

Over-rotation

Changing IPs too often can break continuity and make legitimate-looking flows unstable.

Under-rotation

Leaving the same IP in place too long on a sensitive target can raise the chance of blocks.

Flat routing rules

If every target uses the same routing logic, the pool becomes inefficient quickly.

No health scoring

A pool without performance scoring keeps weak proxies alive too long.

Focusing only on proxy cost

Cheap traffic is not efficient if it produces poor success rates. Measure the cost of usable outcomes, not just the price of access.

What to measure once the pool is live

A production proxy pool should be evaluated like any other critical system.

Track:

- request success rate

- block rate by domain or route

- median and tail latency

- retry depth

- session completion rate

- cost per successful request
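Most of these metrics fall out of per-request records. The sketch below assumes a hypothetical `RequestRecord` shape and uses the nearest-rank definition for the tail percentile; session completion rate and cost need session and billing data that this record does not carry.

```python
import math
import statistics
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestRecord:
    domain: str
    ok: bool
    blocked: bool
    latency_s: float
    retries: int


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile (one of several common definitions)."""
    ordered = sorted(values)
    rank = max(math.ceil(pct / 100 * len(ordered)) - 1, 0)
    return ordered[rank]


def summarize(records: list[RequestRecord]) -> dict:
    if not records:
        raise ValueError("no records to summarize")
    latencies = [r.latency_s for r in records]
    blocks_by_domain: dict[str, list[bool]] = defaultdict(list)
    for r in records:
        blocks_by_domain[r.domain].append(r.blocked)
    return {
        "success_rate": sum(r.ok for r in records) / len(records),
        "block_rate_by_domain": {d: sum(b) / len(b) for d, b in blocks_by_domain.items()},
        "latency_p50_s": statistics.median(latencies),
        "latency_p95_s": percentile(latencies, 95),
        "avg_retry_depth": sum(r.retries for r in records) / len(records),
    }
```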

A simple formula for cost per successful request (CPSR) is:

CPSR = total request-related spend / successful responses

In plain terms: how much you paid for each usable result.

That is often a better operating signal than raw proxy cost alone.
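The cost per successful request (CPSR) formula in code, with a hypothetical comparison between a cheap route with a low success rate and a pricier route with a high one. The figures are made up for illustration, not measurements.

```python
def cpsr(total_spend: float, successful_responses: int) -> float:
    """Cost per successful request: total request-related spend / successful responses."""
    if successful_responses == 0:
        return float("inf")  # money spent with nothing usable to show for it
    return total_spend / successful_responses


# Hypothetical figures for two routes serving the same workload.
cheap_route = cpsr(total_spend=100.0, successful_responses=2_000)
trusted_route = cpsr(total_spend=300.0, successful_responses=9_000)
```

Here the cheaper route costs 0.05 per usable result, while the pricier route costs about 0.033, which is the kind of gap that raw proxy price hides.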

When to redesign the pool

You do not need a redesign every time a target changes, but certain signals suggest the current architecture is no longer enough.

Watch for:

- rising block rates even after pacing changes

- higher retries per successful request

- unstable session completion on key workflows

- repeated geo mismatch problems

- rising cost without a similar increase in output

If those patterns appear together, the architecture likely needs a deeper routing or segmentation update.
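One way to make these warning signs checkable is to compare a baseline window with a recent one and count how many worsened. The `Window` fields, the 10% tolerance, and the two-signal threshold for "appear together" are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Window:
    block_rate: float
    retries_per_success: float
    session_completion: float
    geo_mismatch_rate: float
    spend: float
    successes: int


def redesign_signals(before: Window, after: Window, tolerance: float = 0.10) -> list[str]:
    """List which warning signs worsened by more than `tolerance` (relative change).

    Assumes `after` already reflects any pacing changes, so a rising block
    rate here means pacing alone did not fix it.
    """
    def worse(old: float, new: float) -> bool:
        return new > old * (1 + tolerance)

    signals = []
    if worse(before.block_rate, after.block_rate):
        signals.append("rising block rate")
    if worse(before.retries_per_success, after.retries_per_success):
        signals.append("more retries per success")
    if after.session_completion < before.session_completion * (1 - tolerance):
        signals.append("unstable session completion")
    if worse(before.geo_mismatch_rate, after.geo_mismatch_rate):
        signals.append("geo mismatch")
    # Cost should not grow meaningfully faster than usable output.
    if after.spend / before.spend > (after.successes / before.successes) * (1 + tolerance):
        signals.append("cost rising faster than output")
    return signals


def needs_redesign(signals: list[str], min_signals: int = 2) -> bool:
    return len(signals) >= min_signals
```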

Frequently Asked Questions

What is proxy pool architecture in practical terms?

It is the system that manages how proxies are grouped, selected, rotated, monitored, and replaced during high-volume traffic. It turns a simple proxy list into a controllable part of infrastructure.

How many proxies do I need for high-volume data collection?

There is no single number that fits every workload. The right pool size depends on request volume, target friction, geography, and whether sessions need continuity. Pilot testing is usually more useful than guessing from traffic volume alone.

Should I use both datacenter and residential proxies in one pool?

In many cases, yes. Datacenter proxies often work well for lower-friction traffic, while residential proxies fit protected or location-sensitive requests better. A hybrid model gives more control over cost and reliability.

How do I know when a proxy should be removed from the pool?

If it shows repeated failures, slow response times, challenge pages, or poor geo consistency compared with the rest of the pool, it should first be cooled down or deprioritized, and removed if its performance does not recover.

What is the most common mistake in proxy pool design?

Treating all traffic the same way. A single rule set for routing, retries, and rotation usually causes unnecessary failures as soon as the workload becomes more diverse.

Can proxy pool design affect cost directly?

Yes. Poor routing, weak retries, and unhealthy proxies increase the number of wasted requests. That raises the cost of producing each successful response.

Final thoughts

A strong proxy pool architecture is not about owning the largest pool. It is about matching proxy types to traffic, preserving continuity where it matters, and using feedback to improve routing over time.

If your system is growing, start by classifying the workload and measuring where the pool is leaking efficiency. From there, improve routing, scoring, and failover one layer at a time.

That is how a proxy pool becomes infrastructure instead of just a list of IPs.

About the author

Daniel Mercer

Daniel Mercer designs and maintains high-availability proxy networks optimized for uptime, latency, and scalability. With over a decade of experience in network architecture and IP infrastructure, he focuses on routing efficiency, proxy rotation systems, and performance optimization under high-concurrency workloads. At SquidProxies, Daniel writes about building resilient proxy environments for production use.