
Choosing the best scraping proxies can be an overwhelming task. There are plenty of providers and a wide variety of options. So how do we select the most suitable proxy for our project? In this guide, we cover the key points that will help you scrape successfully.

Vast amounts of data are available online, but not all of it is easily accessible. Serious marketers understand how significant data gathering is: the right data plays a vital role in achieving KPIs and target goals. That is why marketers go the extra mile to collect the information they need, and it is where scrapers come in. Scrapers have proven to be an essential ally in data collection; a good tool can comb through a website thoroughly and extract the information it contains. Even large enterprises use web scrapers. So why do others shudder at the words "web scraping"?

Web scraping is a controversial topic. As a result, some marketers are hesitant to make it part of their data collection efforts. But how can web scraping help a business succeed?

The benefits of web scraping outweigh its potential risks, and anyone who plans to scrape can easily avoid those risks. But how? The answer: proxies. They are a critical element in helping scrapers succeed.

Proxies have a wide variety of applications, and their versatile features can bring advantages to almost any online activity.

The primary purpose of a proxy is to conceal the user's location and source IP address, so users can send web requests without exposing their real information. The ability to change locations while browsing also lets users access geo-specific content: they can gather information from a target region without being physically present there. Brands use this to monitor how they perform in a targeted region, and by understanding their market position, they can improve as a whole. Because proxies can get around content and geo-restrictions, they can also fetch data that is under a blanket ban in the user's own region and reach pages hidden from the standard view.
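To make the geo-targeting idea concrete, here is a minimal sketch of picking a proxy whose exit IP sits in a given country. The `Proxy` record, its `country` field, and the addresses (taken from the reserved documentation ranges) are all hypothetical; real providers expose location data in their own formats.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Proxy:
    """One proxy endpoint plus the country of its exit IP (hypothetical schema)."""
    url: str
    country: str  # ISO 3166-1 alpha-2 code, e.g. "US" or "DE"


def pick_proxy_for_country(pool, country, rng=random):
    """Return a random proxy located in `country`; raise LookupError if none match."""
    candidates = [p for p in pool if p.country == country.upper()]
    if not candidates:
        raise LookupError(f"no proxy available in {country!r}")
    return rng.choice(candidates)


pool = [
    Proxy("http://203.0.113.10:8080", "US"),
    Proxy("http://203.0.113.11:8080", "US"),
    Proxy("http://198.51.100.20:8080", "DE"),
]
print(pick_proxy_for_country(pool, "de").url)  # http://198.51.100.20:8080
```

Requests sent through the returned proxy would then be seen by the target site as coming from that country.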

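Using proxies also helps maximize a scraper's output by reducing block rates. Without proxies, a scraper can collect only a limited amount of data before it is throttled or blocked. Crawl rates refer to the number of requests a website allows from one source in a given timeframe, and this limit differs from site to site. Proxies let spiders work around these crawl rates and collect more data, because the requests are spread across many IP addresses.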
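One way to picture how a proxy pool stretches a per-IP crawl rate is a round-robin rotator that never lets a single proxy send requests faster than a chosen interval. This is a sketch, not any scraper's built-in feature: `RoundRobinRotator` and its `min_interval` parameter are hypothetical, and the right interval for a given site is something you would have to measure yourself.

```python
import itertools
import time


class RoundRobinRotator:
    """Cycle through proxies so each one stays under a per-IP request rate.

    `min_interval` is an assumed per-IP budget: 2.0 means each proxy sends at
    most one request every two seconds. With N proxies, the scraper as a whole
    can send roughly N requests per `min_interval`.
    """

    def __init__(self, proxies, min_interval, clock=time.monotonic, sleep=time.sleep):
        if not proxies:
            raise ValueError("need at least one proxy")
        self._cycle = itertools.cycle(proxies)
        self._last_used = {}  # proxy -> timestamp of its most recent request
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep

    def next_proxy(self):
        proxy = next(self._cycle)
        last = self._last_used.get(proxy)
        if last is not None:
            wait = self.min_interval - (self._clock() - last)
            if wait > 0:
                self._sleep(wait)  # this proxy is still "cooling down"
        self._last_used[proxy] = self._clock()
        return proxy
```

For example, 10 proxies with a 2-second interval each allow about 10 / 2 = 5 requests per second overall, while any single IP still sends only one request every 2 seconds. The `clock` and `sleep` arguments are injectable so the pacing logic can be tested without real waiting.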

Web requests routed through proxies appear to come from separate sources, which lets a scraper stay within the limits set by a website's anti-bot system. Proxies also protect the user's original IP address: if a website detects bot activity, the proxy's IP is penalized rather than the user's real one. Spreading requests across proxy sources this way leads to a higher scraping success rate.
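At the code level, "routing a request through a proxy" can be as simple as the sketch below, which uses Python's standard-library `urllib.request.ProxyHandler`. The proxy address is a placeholder from a reserved documentation range; substitute one from your provider.

```python
import urllib.request


def build_proxied_opener(proxy_url):
    """Return an opener that sends HTTP and HTTPS requests through `proxy_url`.

    The target site sees the proxy's IP address instead of yours.
    """
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


# Placeholder proxy address (hypothetical).
opener = build_proxied_opener("http://203.0.113.10:8080")
# opener.open("https://example.com", timeout=10) would now go through the proxy.
```

Building one opener per proxy and spreading requests across them is what makes the traffic appear to come from separate sources.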

Proxies are indispensable when scraping the web. While spiders are effective in data collection, they can only perform their best when paired with a suitable proxy.

Choosing between private and shared proxies depends on your project requirements. If your project needs high performance and a dedicated, unshared connection, private proxies are best. For smaller-scale projects with a limited budget, shared proxies perform competently.

Free proxies for scraping are generally discouraged. Besides their questionable reliability, they put users at risk of infecting their devices with malware. Because free proxies are open to the public, they are also often used as vessels for illegal activities.

Aside from choosing proxies based on exclusivity, users should also consider where the IPs come from. Proxy servers fall into three categories: datacenter, residential/ISP, and mobile.

Datacenter proxies are the cheapest. Datacenter IPs are created on independent servers, and they are often the most practical proxies for data scraping. Their speed, combined with a capable scraping tool, lets users complete large-scale scraping projects efficiently. Additionally, unlike residential or mobile proxies, datacenter IPs have no third-party affiliation, so they do not raise legal concerns over how the IPs were acquired.

Residential and ISP proxies are affiliated with third parties; residential proxies are mostly rotating, while ISP proxies are static. Because of that third-party affiliation, these proxies can be hard to obtain, which makes them more expensive. In most cases, they give the same results as datacenter IPs, but datacenter proxies come at a much lower price.

Mobile proxies are the hardest to obtain and the most expensive. They are worth using when the scraper needs to collect data that is only visible on mobile devices.

Almost any website can be a target for web scraping, which is why websites implement anti-bot systems. When these systems detect scraping activity, they can immediately impose an IP ban. Depending on the server's setup, the ban may cover a single IP or an entire range of IP addresses. As discussed above, proxies let users route requests through different sources, so the website sees many users instead of one IP source.
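A scraper can react to a ban by retiring the affected IP and retrying through another source. The sketch below assumes that HTTP 403 (Forbidden) and 429 (Too Many Requests) signal a block, which is a common convention but not universal; `fetch` is a hypothetical callable you would supply, returning `(status_code, body)`.

```python
# Status codes commonly returned when a site throttles or blocks a client.
# Treating them as "this IP is burned" is an assumption; sites vary.
BLOCK_STATUSES = {403, 429}


def fetch_with_failover(url, proxies, fetch):
    """Try `url` through each proxy in turn, skipping ones that look banned.

    `fetch(url, proxy)` is a hypothetical callable returning (status, body).
    Returns (proxy, body, blocked) for the first 2xx response, where `blocked`
    is the set of proxies that appeared to be banned along the way.
    """
    blocked = set()
    for proxy in proxies:
        status, body = fetch(url, proxy)
        if status in BLOCK_STATUSES:
            blocked.add(proxy)  # retire this IP and move to the next source
            continue
        if 200 <= status < 300:
            return proxy, body, blocked
        # Other errors (e.g. 5xx) are not treated as bans; just try the next proxy.
    raise RuntimeError(f"all proxies failed for {url}; blocked: {sorted(blocked)}")
```

Keeping the `blocked` set lets the caller drop banned IPs from the pool, which matters when a site bans a whole range rather than one address.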

When choosing the best proxies for scraping Google and other websites, consider how many API calls or requests you need to make; this number determines how large the proxy pool should be. The target website also determines how exclusive the proxies need to be: if the target requires a clean IP history, private proxies are the ideal choice. The proxies should also be compatible with your crawler or scraper to produce optimal results. Finally, each proxy should have a fast load time, because websites can easily detect a slow proxy.
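As a rough way to turn a request count into a pool size, divide the total number of requests by what one IP can safely handle over the job, then add some spare IPs for ones that get blocked. In formula form: proxies = ceil(requests × (100 + headroom%) / (100 × hours × requests per IP per hour)). The per-IP tolerance and the 20% headroom default are assumptions you would tune for each target; the function name is hypothetical.

```python
import math


def estimate_pool_size(total_requests, hours, per_ip_per_hour, headroom_pct=20):
    """Rough number of proxies needed so no single IP exceeds the site's tolerance.

    `per_ip_per_hour` is your own estimate of how many requests the target
    allows from one IP per hour; `headroom_pct` adds spare IPs for ones that
    get blocked (20% by default, an arbitrary assumption).
    """
    if total_requests <= 0 or hours <= 0 or per_ip_per_hour <= 0:
        raise ValueError("all inputs must be positive")
    # Requests one IP can safely carry over the whole job.
    per_ip_capacity = hours * per_ip_per_hour
    # Scale up by the headroom percentage, then round up to whole proxies.
    return math.ceil(total_requests * (100 + headroom_pct) / (100 * per_ip_capacity))


print(estimate_pool_size(100_000, hours=10, per_ip_per_hour=500))  # 24
```

Here 100,000 requests over 10 hours at 500 requests per IP per hour needs 20 IPs at minimum; the 20% headroom brings that to 24.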

Each scraping tool has its own proxy management setup. Although most of these tools serve the same function, they offer different features. For example, some scrapers provide their own proxies, while others let users load their own, either individually or by uploading a proxy list.
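If your tool (or your own script) accepts an uploaded proxy list, it usually needs to be converted into proxy URLs first. This sketch assumes a common plain-text layout of one proxy per line, either `host:port` or `host:port:username:password`; providers differ, so treat the format and the `parse_proxy_list` name as assumptions.

```python
from urllib.parse import quote


def parse_proxy_list(text, scheme="http"):
    """Turn a pasted proxy list into proxy URLs.

    Assumes one proxy per line as `host:port` or `host:port:username:password`.
    Blank lines and lines starting with `#` are skipped.
    """
    urls = []
    for lineno, raw in enumerate(text.splitlines(), start=1):
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split(":")
        if len(parts) not in (2, 4) or not parts[1].isdigit():
            raise ValueError(f"line {lineno}: unrecognized proxy format {line!r}")
        host, port = parts[0], parts[1]
        if len(parts) == 2:
            urls.append(f"{scheme}://{host}:{port}")
        else:
            user, password = parts[2], parts[3]
            # Percent-encode credentials so characters like "@" don't break the URL.
            urls.append(f"{scheme}://{quote(user, safe='')}:{quote(password, safe='')}@{host}:{port}")
    return urls
```

The resulting URLs can be fed to any HTTP client that accepts proxy URLs, including the standard-library `urllib.request.ProxyHandler`.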

Some scrapers also support automatic IP rotation. Whether to enable it depends on the project's target. If the target website requires a login, rotating proxies are not always ideal: when a logged-in session suddenly jumps to a realistically impossible location, it may trigger an anti-bot system and get the operation blocked.
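For login-based targets, one option is a "sticky" assignment: each account or session always uses the same proxy, while anonymous requests can still rotate. This sketch hashes the session ID with SHA-256 so the mapping stays stable across runs (Python's built-in `hash()` for strings is randomized per process, so it would not). The `sticky_proxy` name and the sample pool are hypothetical.

```python
import hashlib


def sticky_proxy(session_id, proxies):
    """Always map the same session (e.g. one logged-in account) to the same proxy.

    A stable hash keeps the choice consistent across runs, so a logged-in
    session does not appear to jump between locations.
    """
    if not proxies:
        raise ValueError("need at least one proxy")
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(proxies)
    return proxies[index]


pool = ["http://203.0.113.10:8080", "http://203.0.113.11:8080", "http://198.51.100.20:8080"]
print(sticky_proxy("account-42", pool))  # same proxy every time for this account
```

Note that if the pool changes size, many sessions will be remapped, so it helps to keep the pool fixed while accounts are logged in.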

Before starting any scraping activity, test the tool. Understand its functions and terms, and read or watch the available documentation. Start with small projects before scaling up, identify what the scraper can and cannot do, and then customize its settings to fit the project's requirements.
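A small trial run is also a good moment to check each proxy's load time before committing it to a large job. The sketch below times one test request per proxy and keeps only those under a threshold. `fetch` is a hypothetical callable you supply (for example, a request to a lightweight page through that proxy), and the 3-second cutoff is an arbitrary assumption.

```python
import time


def benchmark_proxies(proxies, fetch, max_seconds=3.0, clock=time.perf_counter):
    """Time one small test request through each proxy and keep the fast ones.

    `fetch(proxy)` is a hypothetical callable that should raise on failure.
    Returns (fast, rejected): `fast` is sorted by response time, and
    `rejected` maps each discarded proxy to the reason.
    """
    timings = []
    rejected = {}
    for proxy in proxies:
        start = clock()
        try:
            fetch(proxy)
        except Exception as exc:  # connection refused, timeout, bad auth, ...
            rejected[proxy] = repr(exc)
            continue
        elapsed = clock() - start
        if elapsed > max_seconds:
            rejected[proxy] = f"too slow: {elapsed:.2f}s"
        else:
            timings.append((elapsed, proxy))
    timings.sort()
    return [proxy for _, proxy in timings], rejected
```

The `clock` argument is injectable so the filtering logic can be checked without making real network calls.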

To scrape successfully with proxies, make your requests appear as human as possible. Make sure the proxies are compatible with the scraper and that they offer a stable connection and reliable speed.
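One common way to make traffic look less mechanical is to randomize the gaps between requests and send browser-like headers instead of an HTTP library's default. This sketch produces a request plan of (delay, headers) pairs; it picks one User-Agent per session so headers stay consistent, as a real browser's would. The 2 to 6 second range, the User-Agent strings, and the function name are illustrative assumptions, not known-safe values.

```python
import random

# A few desktop browser User-Agent strings (illustrative; keep your own list current).
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/124.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/17.4 Safari/605.1.15",
    "Mozilla/5.0 (X11; Linux x86_64; rv:125.0) Gecko/20100101 Firefox/125.0",
]


def human_like_request_plan(n_requests, min_delay=2.0, max_delay=6.0, rng=None):
    """Yield (delay_seconds, headers) pairs for one browsing session.

    Randomized gaps avoid the perfectly regular timing of a naive loop.
    One User-Agent is chosen for the whole session so headers stay consistent.
    """
    rng = rng or random.Random()
    user_agent = rng.choice(USER_AGENTS)
    for _ in range(n_requests):
        delay = rng.uniform(min_delay, max_delay)
        headers = {"User-Agent": user_agent, "Accept-Language": "en-US,en;q=0.9"}
        yield delay, headers
```

In a scraper loop you would `time.sleep(delay)` before each request and pass `headers` to your HTTP client, ideally combined with the proxy rotation discussed above.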

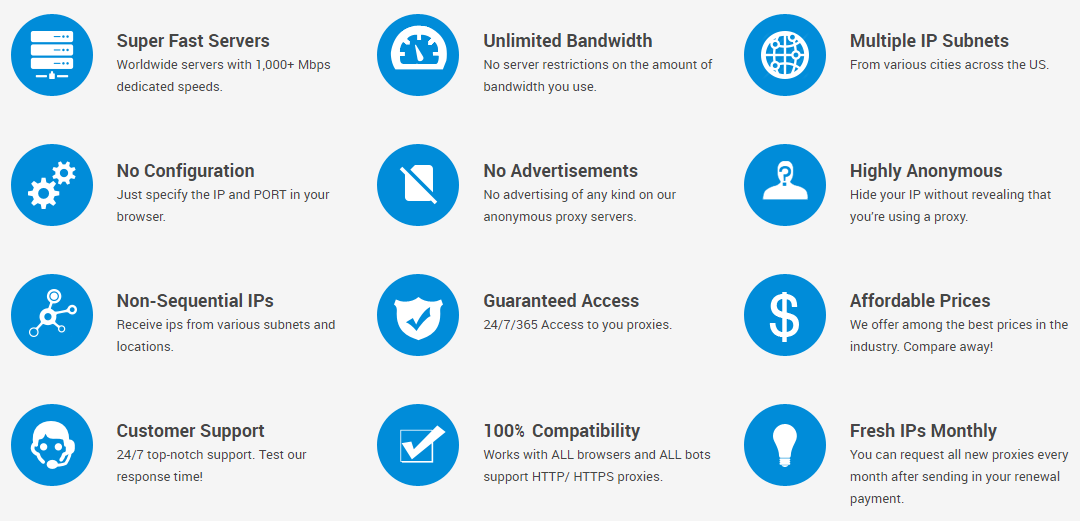

Squidproxies offers proxies with guaranteed elite anonymity. Easily choose from our pool of private and shared proxies at any time. As an added feature, you can request an all-new proxy pool every month for free! Never worry about running out of proxies when scraping.

Start with 10 private proxies if you're unsure.

We accept PayPal, credit card, and Bitcoin payments.

Log in to your proxy control panel and get started.